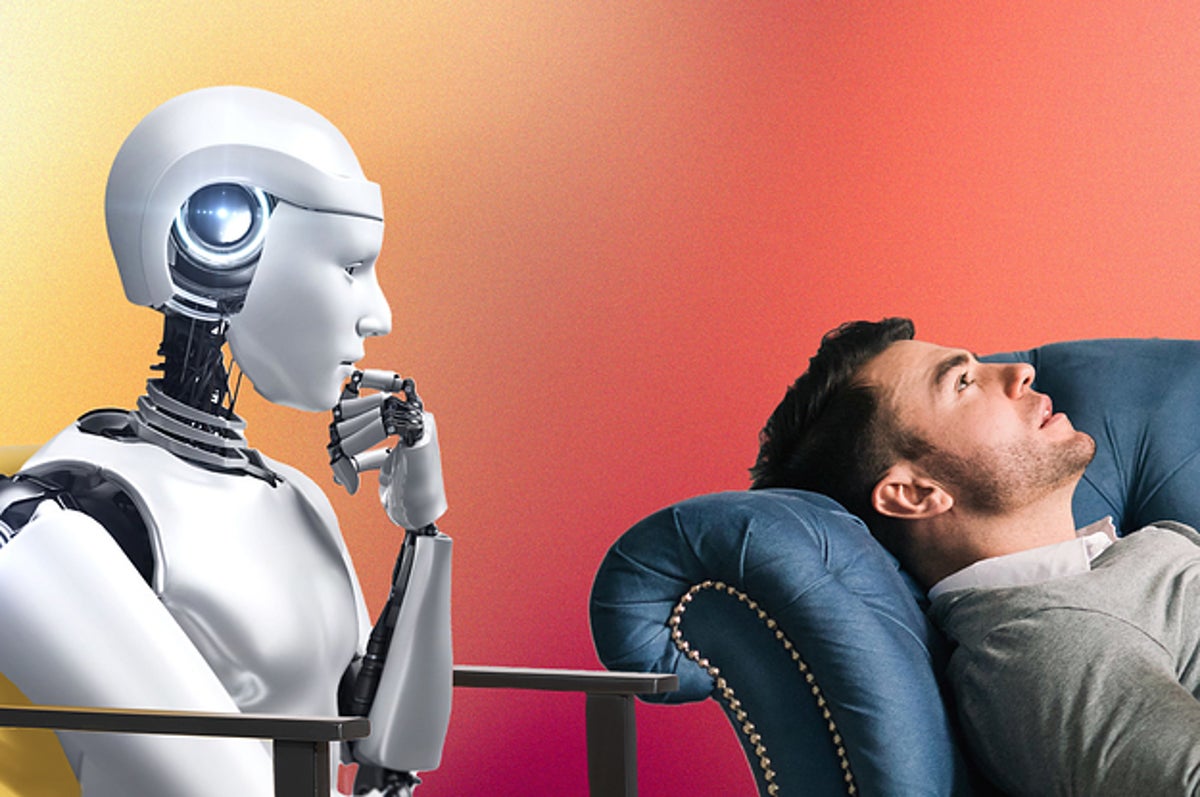

AI in psychotherapy

July 07, 2023

The potential impact of artificial intelligence (AI) on various aspects of our lives is a hot topic these days. From college term papers written by AI models to AI-generated art, it seems that AI is making its way into different fields. One area where AI is being explored is in mental health services. AI chatbots are being considered as potential providers of mental health support, especially in situations where there's a shortage of human therapists, such as in the VA system.

There are even discussions about using AI as a "tripsitter" for therapies involving substances like ketamine or psilocybin. Companies are exploring the development of sophisticated digital therapeutics to accompany these therapies. The idea behind AI therapy is to replace human therapists with computer algorithms that possess advanced language-processing capabilities. While these AI models have come a long way from the early attempts at replicating therapy in the 1960s, something seems to be missing.

In the world of fiction, Philip K. Dick's novel "Do Androids Dream of Electric Sheep?" (which was adapted into the film "Blade Runner") introduced the concept of an empathy test to differentiate between humans and androids. This highlights the challenge of creating human-like robots or AI systems that don't trigger feelings of unease or revulsion, a phenomenon known as "the uncanny valley."

As we explore the use of AI in therapy, there are several important questions to consider. Who owns the private information shared with therapy chatbots? While human therapists are bound by privacy laws, can the same be said for AI systems when users mindlessly agree to terms and conditions? Social media has shown that people are willing to sacrifice personal information for connectivity. Does this mean that AI therapy will create a divide, where those who can afford traditional therapy pay for it while others settle for AI therapy that trades their personal data for marketing purposes? All these questions need to be addressed.

Although AI may raise privacy concerns, it's also possible that it could improve psychotherapy in terms of accessibility, treatment consistency, and the ability to collect valuable data on what works in therapy. Traditional therapy has been challenging to study due to differences in how it's delivered by practitioners. An AI software developer might be able to program AI to adapt to a client's needs in a way that a human therapist cannot.

However, the idea of AI therapy also causes unease. Therapy has always been a deeply human experience, where transference, cognitive distortions, and the regulation of the autonomic nervous system through facial expressions play significant roles. Teletherapy already poses challenges in these areas, and it seems impossible for an AI algorithm to replicate the human-to-human connection and the ability to co-regulate. That would make psychotherapy one of the jobs AI can't replace.

Therapy is not just about the words spoken; it's about the interpersonal dynamics and meta-layers that exist within the therapeutic relationship. For individuals with social anxiety or agoraphobia, the act of leaving the house and engaging with another person can be a valuable form of exposure therapy. Similarly, the physical presence of a patient at a clinic can serve as behavioural activation for someone with depression. While teletherapy and AI may reduce barriers to treatment, they cannot fully replace these essential aspects of psychotherapy.